FORTUNE 100 Retailer

Executive Summary

A Fortune 100 retailer wanted to move its large custom business intelligence (BI) application, which executes close to 40 million queries per week, to Microsoft Azure SQL Data Warehouse, a cloud-native data warehouse. The retailer's own testing and proofs of concept (POCs) had found that rewriting the entire application stack for the new cloud data warehouse would be a multi-year project costing tens of millions of dollars. Datometry demonstrated that with its flagship product, Datometry® Hyper-Q™, the customer could migrate to the new data warehouse in 12 weeks.

Challenges

The retailer faced the following challenges in migrating its custom business intelligence applications:

- A migration to cloud-native Azure SQL DW, pursued for competitive business advantage, would take several years and cost tens of millions of dollars.

- Critical business-facing database workloads needed to be consolidated prior to the migration.

- Capital and operating expenses (CAPEX and OPEX) for its aging Teradata data warehouse were far too high.

Datometry’s Solution

- Datometry Hyper-Q allowed the customer to move its stored procedures to the modern Azure SQL DW as-is, without rewriting them, completing the migration in 12 weeks.

- The deployment, including the hardening phase, took just four months instead of several years.

Why the Data Architect Chose Datometry

Fast Deployment & Simple Implementation

Hyper-Q can be deployed instantly and requires a testing phase of weeks, not months. The software does not require tuning and provides complete visibility into its operations.

High Concurrency & Workload Management Capabilities

Hyper-Q supports high concurrency and provides workload management for mixed workloads.

Support for Custom Business Applications

Hyper-Q instantly and natively supports mission-critical custom retail BI applications, with no need for an expensive application rewrite project.

Why the Business Chose Datometry

Reduce Cost of Ownership

The customer moved from its expensive, long-standing data warehouse to a more economical one, cutting CAPEX and OPEX by up to 80%.

Preserve Business Investment

Hyper-Q allowed the customer to preserve its long-standing investment in custom, mission-critical business logic, because the Datometry solution does not require applications to be rewritten.

Accelerated Time to Value

Using Datometry Hyper-Q, the customer adopted a modern, cost-effective enterprise data management strategy in weeks rather than years.

Decreased Risk

Because Hyper-Q leaves existing applications unchanged, the same applications can be run against the new data warehouse before cutover, so replatforming projects can be fully tested in advance.

How Datometry Hyper-Q Adaptive Data Virtualization Technology Works

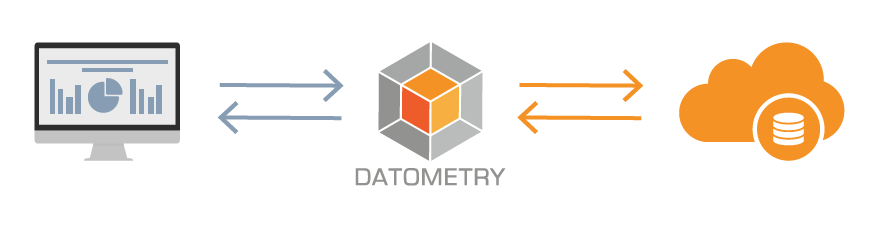

Datometry’s first-of-its-kind Adaptive Data Virtualization™ technology enables enterprises to instantly run and manage applications on cloud databases or data warehouses, across multiple databases or data warehouses, or between different cloud platforms. As a result, business applications do not need to be rewritten or reconfigured; they talk directly to Datometry Hyper-Q as if it were the original database or data warehouse. For example, applications originally developed for Teradata can run transparently on Microsoft Azure SQL DW, Amazon Redshift, Pivotal Greenplum, and other data warehouses.

Learn more about product features, see a platform overview, and read answers to frequently asked questions on the Hyper-Q product page.

Sponsored by Datometry