On Big Data Benchmarking. Q&A with Richard Stevens

Q1. You have recently completed the DataBench Project. What was the aim of the project?

There is extensive information on how and why to use technical benchmarking to assess specific management and analytics processes. What companies lack are objective, evidence-based methods and tools to measure the elements that show a correlation between technology benchmarks and business benchmarks, and to assess the impact these technologies have on the competitiveness and market success of organizations. Providing such methods and tools was the principal aim of DataBench.

Q2. Database benchmarks have been around for many years. What is different with Big Data Technology (BDT) benchmarks?

Benchmarking has existed for a long time, but no single benchmark has been specifically designed to measure the efficacy of Big Data analytics. Organizations need to assess networking, databases, algorithmic efficiency and so on, and of course this changes with every benchmarking objective and with every system configuration. DataBench was envisioned to map the particular stack of technologies being used and to identify the touchpoints at which it can easily be assessed.

Q3. What did you learn in performing a comparative analysis of existing benchmarking initiatives and technologies?

Confucius said that if you can describe something, you can measure it. In DataBench we believe the same thing, and the first step is to describe, or in our terms classify, the processes being executed in an organization's pipeline. DataBench has shown us that, once classified, processes can be mapped to blueprints and thus quickly and effortlessly compared with the Big Data and AI pipelines of similar organizations.

Q4. Were you able to provide evidence-based methods to measure the correlation between Big Data Technology (BDT) benchmarks and the organisation’s business benchmarks?

It should be pointed out that there is still much work to be done. Although we have published (ref.) the correlations, the empirical sample of real-life benchmarks run with tools like the DataBench Toolbox is still too small to be statistically significant. Much larger datasets are required before the results can be considered empirically robust and allow for meaningful comparisons.
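To illustrate why a small sample limits what can be concluded, here is a minimal sketch of a standard significance test for a correlation coefficient. It is not DataBench's published methodology: it assumes a Pearson correlation `r` between one technical and one business indicator, uses Fisher's z-transform with a normal approximation, and the value `r = 0.4` is purely hypothetical. The same correlation that is not significant with 10 paired observations becomes significant with 100.

```python
import math


def fisher_z_pvalue(r: float, n: int) -> float:
    """Approximate two-sided p-value for H0: rho = 0 using Fisher's z-transform.

    Assumes a Pearson correlation r from n paired observations; the normal
    approximation is rough for very small n.
    """
    if not -1.0 < r < 1.0:
        raise ValueError("r must lie strictly between -1 and 1")
    if n <= 3:
        raise ValueError("need more than 3 paired observations")
    # atanh(r) is approximately normal with standard error 1 / sqrt(n - 3).
    z = math.atanh(r) * math.sqrt(n - 3)
    # Two-sided tail probability of a standard normal variable.
    return math.erfc(abs(z) / math.sqrt(2))


# Same hypothetical correlation, two hypothetical sample sizes.
r = 0.4
for n in (10, 100):
    p = fisher_z_pvalue(r, n)
    print(f"n={n:3d}  p={p:.4f}  significant at 5%: {p < 0.05}")
```

The point is the dependence on `n`: the strength of the relationship is unchanged, only the amount of evidence grows.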

Q5. Were you able to measure return on investment (ROI) as well?

Companies we interviewed cite return on investment as a primary reason for benchmarking. The econometric research needed to demonstrate ROI is a task being carried out at IDC.

Q6. One of the outputs of the project is the DataBench Framework. What is it?

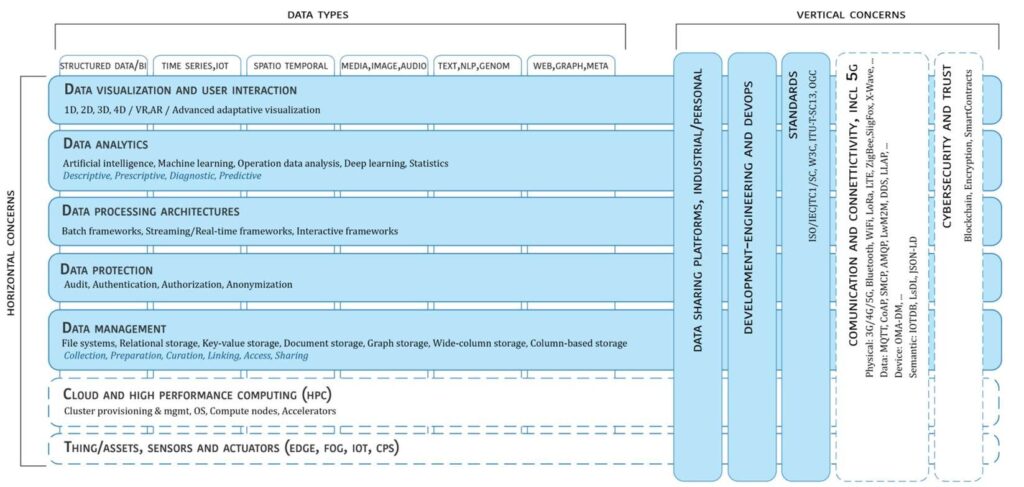

The DataBench Reference Framework, shown in Figure 1, serves as a common reference framework for locating Big Data technologies in the overall IT stack.

It is structured into horizontal and vertical elements.

- Horizontal elements cover specific aspects along the data processing chain, starting with data collection and ingestion and reaching up to data visualization. It should be noted that the horizontal elements may be executed in different physical layers.

- Vertical elements address cross-cutting issues, which may affect all the horizontal concerns. In addition, verticals may also involve non-technical aspects (e.g., standardization, which raises technical concerns but also non-technical ones).

Figure 1 DataBench Reference Model
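One way to picture how the framework "locates" technologies is a small data structure that tags each component of a stack with the horizontal stage(s) it covers and the vertical concerns it touches. This is a hypothetical sketch, not part of the DataBench deliverables: only the first and last horizontal stages (collection/ingestion and visualization) and the standardization vertical come from the text; the intermediate stages, the other verticals and the component names are illustrative assumptions.

```python
from dataclasses import dataclass, field
from enum import IntEnum


class Horizontal(IntEnum):
    # Ordered along the data processing chain. Only the first and last
    # stages are named in the text; the middle ones are assumptions.
    COLLECTION_INGESTION = 1
    STORAGE = 2        # hypothetical
    PROCESSING = 3     # hypothetical
    ANALYTICS = 4      # hypothetical
    VISUALIZATION = 5


# Cross-cutting concerns: "standardization" comes from the text,
# the others are illustrative placeholders.
VERTICALS = {"standardization", "security", "data_protection"}


@dataclass
class Technology:
    name: str
    horizontals: set[Horizontal]
    verticals: set[str] = field(default_factory=set)

    def __post_init__(self) -> None:
        # Reject vertical concerns that are not part of the (assumed) model.
        unknown = self.verticals - VERTICALS
        if unknown:
            raise ValueError(f"unknown vertical concern(s): {sorted(unknown)}")


def locate(stack: list[Technology]) -> dict[Horizontal, list[str]]:
    """Group a technology stack by the horizontal stage(s) each component covers."""
    placement: dict[Horizontal, list[str]] = {h: [] for h in Horizontal}
    for tech in stack:
        for h in sorted(tech.horizontals):
            placement[h].append(tech.name)
    return placement


# Hypothetical stack; component names are placeholders.
stack = [
    Technology("ingest-tool", {Horizontal.COLLECTION_INGESTION}),
    Technology("engine-x", {Horizontal.PROCESSING, Horizontal.ANALYTICS}, {"security"}),
    Technology("dashboard-y", {Horizontal.VISUALIZATION}, {"standardization"}),
]
print(locate(stack)[Horizontal.ANALYTICS])  # ['engine-x']
```

Empty stages in the result (here `STORAGE`) show where a stack has no component, which is the kind of gap a reference model makes visible.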

Q7. What kind of metrics did you define for a BDT assessment?

We have examined BDT metrics and their assessment across the dimensions of the framework depicted above. More detail on each of the elements can be found in the Analytics and Processing and the Data Management documentation at: https://www.databench.eu/public-deliverables/

Q8. You also produced a DataBench Toolbox. What is it? What is it useful for?

The DataBench Toolbox is a one-stop shop for Big Data benchmarking. It is the central entry point to the DataBench results and provides web-based portal access to the reference framework, to knowledge about benchmarking and to the “tools” made available by the project. It offers different services for different types of users:

- Technical users can find, and eventually deploy and execute, benchmarks to evaluate technical indicators of Big Data systems, components or applications.

- Business users are typically interested in business benchmarks rather than in performing a technical benchmarking task. They want to understand the underlying business implications of selecting a Big Data system and how this choice influences their business indicators. They therefore typically look for similar cases in their industry, or for high-level reference architectural blueprints for their domain or use case, and navigate through the KN catalogue to find the right answers.

- Benchmark providers are people or organisations, typically developers or providers of Big Data benchmarks, who want to add or manage their own technical benchmarks in the Toolbox's benchmark catalogue. They can add or update benchmarks and, ultimately, declare and provide the automation mechanisms that enable deployment and execution from the Toolbox.

Q9. Now that the project is finished, can the results produced by the DataBench Project be used by interested parties?

Yes, several companies, research initiatives and trade and technology clusters are already using DataBench. Private consultancies are preparing to provide customization and industrial deployment plans. A look at the DataBench Community page highlights a few of the companies involved.

Q10. Who was involved in the DataBench Project?

DataBench was coordinated by IDC, a global leader in technology market research and analytics, and included seven partners from both the private and the public sector, drawn from five different Member States (Bulgaria, Germany, Italy, Slovenia and Spain) and one EFTA country (Norway). Together they brought a set of strong complementary skills in research, development and innovation, while representing a variety of sectors including industry, research and academia.

Resources

- Position paper: Relating Big Data Business and Technical Performance Indicators

- DataBench: Evidence Based Big Data Benchmarking to Improve Business Performance

- Tutorial on Benchmarking Big Data Analytics Systems

- DataBench Toolbox Architecture