Sentiment Analysis using Twitter API and RoBERTa model

By Stefanos Nikolopoulos

Senior Data Scientist at Pfizer

Sentiment analysis is a powerful tool that can help businesses, organizations, and individuals understand how people feel about a particular topic or brand. One of the best ways to gather sentiment data is through social media, and Twitter is one of the most popular platforms for this task. In this article, we’ll take a closer look at how you can use the Twitter API and the RoBERTa model to conduct sentiment analysis on tweets.

Twitter API

First, let’s talk about the Twitter API. This is a tool that allows developers to access tweets, hashtags, and other information from Twitter in real-time. This means you can use the API to gather a large amount of data quickly and easily. To use the Twitter API, you’ll need to create a developer account and get an API key. Once you have the key, you can use it to access tweets that match certain criteria, such as keywords or hashtags.

To access the Twitter API, you need to create a developer account on the Twitter Developer platform, which can be found at https://developer.twitter.com/en/apps. Once you have created an account, you can create a project and an app, which will provide you with the necessary credentials, such as the API key and API secret key, to connect to the API. To use the Twitter API, you will also need to have a Twitter account and be logged in. You will need to agree to the Twitter Developer Agreement and Developer Policy. There are different levels of access and usage limits to the Twitter API, so you’ll want to consider your use case and select the appropriate plan that fits your needs.

RoBERTa model

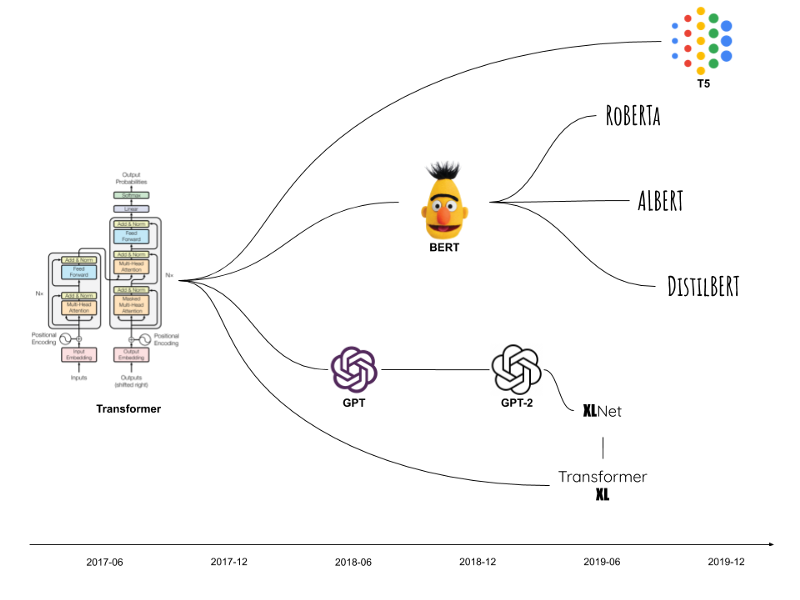

RoBERTa (Robustly Optimized BERT Pre-training) is a pre-trained language model developed by Facebook AI, which is particularly useful for sentiment analysis. The model is based on the transformer architecture and it’s an extension of the popular BERT (Bidirectional Encoder Representations from Transformers) model, with a number of changes and improvements aimed at increasing its performance.

The key improvements in RoBERTa make it particularly well suited for sentiment analysis. One of the main advantages is that RoBERTa is trained on a much larger dataset, which includes more than 160GB of text data, compared to BERT’s 34GB. This allows RoBERTa to have a much larger amount of knowledge and context to draw from when processing text. This is particularly important in sentiment analysis where the context of the text is crucial to understand the sentiment expressed.

Another improvement is the use of dynamic masking, which means that during training, the model is presented with a random subset of the tokens in each input sentence, rather than just a fixed set of tokens as in BERT. This allows RoBERTa to learn from a more diverse set of examples, and can make it more robust to changes in input data, which is important when dealing with social media text that can be informal, and sometimes inconsistent.

RoBERTa also uses a technique called “No-Mask-Left-Behind” (NMLB), which ensures that all tokens are masked at least once during training, whereas BERT uses a technique called “Masked Language Modeling” (MLM) which only masks 15% of the tokens. This leads to a better representation of the input sentence and a more accurate sentiment analysis.

The model can be fine-tuned for sentiment analysis, and it has shown state-of-the-art results in many benchmarks, making it a powerful tool for this specific NLP task. To learn more about RoBERTa and fine-tuning, you can refer to the following links:

https://huggingface.co/docs/transformers/model_doc/roberta

https://github.com/facebookresearch/fairseq/tree/main/examples/roberta

https://arxiv.org/abs/1907.11692

Sentiment Analysis of tweets

To conduct sentiment analysis using the Twitter API and the RoBERTa model, you’ll first need to gather a large amount of tweets that match your criteria. Once you have the tweets, you can use the RoBERTa model to analyze the text and determine the sentiment. This process can be automated, so you can gather and analyze large amounts of data quickly and easily.

The code below connects to the Twitter API using the Tweepy library and searches for tweets containing the keyword “Google”, using a pre-trained RoBERTa model from the transformers library for sentiment analysis. The code then calculates the positivity, neutral and negativity score for each tweet and prints the text of the tweet and the scores for the sentiment analysis.

from transformers import AutoTokenizer, AutoModelForSequenceClassification

from scipy.special import softmax

import tweepy

# Credentials

API_KEY = ‘YOUR_API_KEY’

API_SECRET_KEY = ‘YOUR_SECRET_KEY’

# Establish Connection

auth = tweepy.OAuth1UserHandler(

API_KEY ,

API_SECRET_KEY

)

# Search tweets by keyword until the desired date

keyword = “Google”

date = ‘2023-01-20’

api = tweepy.API(auth)

tweets = api.search_tweets(q = keyword, tweet_mode = “extended”, until = date)

# Load RoBERTa model

tokenizer = AutoTokenizer.from_pretrained(“cardiffnlp/twitter-roberta-base-sentiment”)

model = AutoModelForSequenceClassification.from_pretrained(“cardiffnlp/twitter-roberta-base-sentiment”)

# Use the model on each tweet to perform sentiment analysis and seperate scores for positve, neutral and negative

labels = [‘Negative’, ‘Neutral’, ‘Positive’]

for tweet in tweets:

try:

inputs = tokenizer(tweet.retweeted_status.full_text, return_tensors=“pt”)

classification = model(**inputs)

scores = classification[0][0].detach().numpy()

scores = softmax(scores)

print(“Tweet: “, tweet.retweeted_status.full_text)

for i in range(len(scores)):

l = labels[i]

s = scores[i]

print(l, s)

except AttributeError:

print(tweet.full_text)

print(“===================================================================”)

Conclusion

In conclusion, the combination of the twitter API and RoBERTa language model can be a powerful tool for sentiment analysis on twitter, providing accurate and reliable results, and allowing businesses and researchers to gain a deeper understanding of public opinion on a wide range of topics.

Even sentiment analysis is a powerful technique, one of its main drawbacks is its tendency to produce unreliable or even biased results. This can occur when the training data used to train the model is unbalanced or contains biases, which can lead to the model having a skewed understanding of what constitutes positive or negative sentiment.

………………………………………………………………………………………………………………………………………….